San Francisco, California, Apr 9, 2026 (Issuewire.com) - Nanonets has released OCR-3, the latest model in its family of visual language models (VLMs) specialized for OCR and document understanding tasks. It is specifically built for agents, RAG, and other LLM-based use cases.

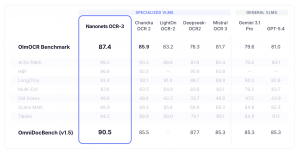

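According to benchmark results released by the team, it is the most accurate OCR model available today, ranking first globally on two of the three benchmarks listed below: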

- 93.1 on OLM-OCR (Global #1)
- 85.9 on IDP Leaderboard (Global #1)
- 90.5 on OmniDocBench

The team also released scores on domain-specific document benchmarks:

- 94.5% on FinanceBench (dense SEC 10-K filings)
- 96.0% on DocBench Legal (multi-column court filings)
- 90.1% on HealthcareBench (clinical notes and forms)

OCR-3 provides bounding boxes and confidence scores as metadata in its outputs, two features that are sorely missed in document pipelines built on foundation models or other VLMs.

-

Confidence scores: Every extraction includes a reliability score. You can pass high-confidence outputs directly, and route low-confidence outputs to a human or a larger model.

-

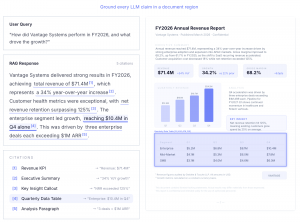

Bounding boxes: The model outputs exact spatial coordinates for every element. These are useful for RAG citations, feeding agents precise document regions, source highlighting, and observability in the agentic stack.
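The routing idea described above can be illustrated with a minimal Python sketch. The release does not document the output schema, so the `Extraction` shape, the `[0, 1]` confidence range, the `(x0, y0, x1, y1)` box layout, the `0.9` threshold, and the sample values are all assumptions made for illustration only.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Extraction:
    """One extracted element; hypothetical shape, not the documented OCR-3 schema."""
    field: str
    value: str
    confidence: float                        # assumed to lie in [0.0, 1.0]
    bbox: Tuple[float, float, float, float]  # assumed (x0, y0, x1, y1) page coordinates


def route_by_confidence(
    items: List[Extraction], threshold: float = 0.9
) -> Tuple[List[Extraction], List[Extraction]]:
    """Split items into (accepted, needs_review) around an illustrative threshold."""
    accepted = [it for it in items if it.confidence >= threshold]
    needs_review = [it for it in items if it.confidence < threshold]
    return accepted, needs_review


def citation(item: Extraction, page: int) -> str:
    """Format a source citation from a bounding box, e.g. for a RAG answer."""
    x0, y0, x1, y1 = item.bbox
    return f"{item.field} (page {page}, region {x0:g},{y0:g} to {x1:g},{y1:g})"


# Made-up sample values, used only to exercise the functions.
sample = [
    Extraction("invoice_total", "1,240.00", 0.98, (410, 702, 498, 720)),
    Extraction("due_date", "2026-05-01", 0.61, (72, 140, 160, 156)),
]
accepted, needs_review = route_by_confidence(sample)
```

Here `accepted` would flow straight into the downstream system, while `needs_review` would go to a human or a larger model. The right threshold depends on the cost of an error in a given pipeline.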

Additionally, the model natively supports visual question answering (VQA), with answers backed by source evidence from the documents. It also provides a RAG mode that converts documents into chunks of structured markdown optimized for RAG applications and agents.

The model API exposes five endpoints:

- /parse: returns structured markdown with exact reading order.
- /extract: returns a type-safe object based on your custom schema.
- /split: separates large batches of mixed documents.
- /chunk: creates context-aware document chunks for RAG.
- /vqa: returns grounded answers with exact source regions.
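As a rough sketch of how a client might address these five endpoints, the Python snippet below builds a request without sending it. Only the endpoint paths come from the release. The base URL, the JSON body fields, the bearer-token header, and the `question` option are hypothetical placeholders, not the documented API.

```python
import json
import urllib.request

# Hypothetical base URL; the release does not give one.
BASE_URL = "https://api.example.com"

# The five endpoint paths named in the release.
ENDPOINTS = {"/parse", "/extract", "/split", "/chunk", "/vqa"}


def build_request(endpoint, document_url, api_key, **options):
    """Build (but do not send) a POST request for one endpoint.

    The JSON body, the bearer-token header, and the option names are
    all assumptions made for illustration.
    """
    if endpoint not in ENDPOINTS:
        raise ValueError(f"unknown endpoint: {endpoint}")
    body = {"document_url": document_url, **options}
    return urllib.request.Request(
        BASE_URL + endpoint,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Example: a visual question-answering request (placeholder values throughout).
req = build_request(
    "/vqa",
    "https://example.com/contract.pdf",
    api_key="YOUR_API_KEY",
    question="What is the termination notice period?",
)
```

Sending the request would be a matter of passing `req` to `urllib.request.urlopen`. The actual request and response formats should be taken from the official API reference.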

Nanonets OCR-3 is a 35-billion-parameter Mixture-of-Experts (MoE) model, an architecture that makes it twice as fast as OCR-2. It was trained on a dataset of 11 million documents.

Developers can access the OCR-3 model API starting today.

Media Contact

Nanonets *****@nanonets.com https://nanonets.com